Machine learning and AI are powerful tools that can dramatically reduce costs, improve processes, and increase productivity for your organization. But these tools are not without risk. Apple made headlines in November 2019 when Twitter user David Heinemeier Hansson (@dhh, known as DHH) posted a thread about alleged gender bias in the Apple Card’s credit algorithm.

Apple had launched a new credit card, the Apple Card, and positioned transparency as a key feature. However, when DHH and his wife applied for the card, he received a credit limit twenty times higher than hers, even though her credit score was higher than his.

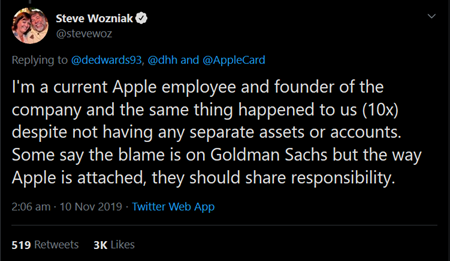

DHH’s thread quickly went viral as other Apple Card customers chimed in with similar stories, including Apple co-founder Steve Wozniak.

Wozniak and his wife share all of their assets and accounts, yet he still received a credit limit ten times higher than she did.

Representatives for the tech giant reportedly responded, “It’s just the algorithm.”

The problem here, aside from how Apple customer service representatives handled the situation, is that employees could not explain why the algorithm seemed to exhibit bias against women. One representative reportedly claimed that Apple employees could not examine, access, or check the algorithm.

Cases like these remind us that algorithms are susceptible to the same biases as their creators. Algorithms are step-by-step instructions designed and built by fallible human beings.

Let’s say you want to design an algorithm that gives each of your customers a value score. To help the software learn, you’ll need to feed it data. However, as soon as you select that data, you are explicitly choosing what to include and what to leave out, which can introduce bias into the system.

Next, consider the team building your tools and programs. Despite recent efforts to increase diversity in IT, programming is still heavily dominated by men, especially white, straight men. If your team doesn’t meaningfully include women, people of colour, and gender-diverse people, you’ve introduced bias.

Without realizing it, your organization could build a system that systematically produces unfair outcomes for people who look, sound, or act differently from your team. The greater the risk of bias, the greater your risk of alienating customers, creating a PR nightmare, facing stiff fines and scrutiny from regulators and government, and losing revenue.

Whenever you introduce AI and machine learning into your organization, start with the human element. That means more than the diversity and makeup of your teams, and more than testing and re-testing new tools to ensure they work fairly and properly; it also means allowing and encouraging your staff to continuously evaluate those tools and raise concerns. If your team isn’t empowered to speak up, the organization is at risk.

As the decision-maker, you also need the confidence to know which questions to ask and when. As you evaluate new tools and techniques, you need to be able to identify opportunities while also recognizing their limitations and spotting potential risks.

Rotman knows that managing disruptive technologies requires a multi-faceted approach to staying ahead. We’ve designed a suite of programs to help you adapt to a changing world. Consider our Inclusion by Design program to help make your organization more inclusive, enrol in our Enterprise Risk Management program to develop strategies for mitigating cyber risk, and stay tuned for our Managing with Machines program, coming in spring 2020.